How do you balance security and velocity in large teams? This question surfaced during my recent work with a customer that had more than 10 teams using the Scaled Agile Framework (SAFe), an agile software development methodology. In aiming for correctness and security of the product, as well as for development speed, teams faced tension in their objectives. One such instance involved the development of a continuous-integration (CI) pipeline. Developers wanted to build features and deploy to production, deferring non-critical bugs as technical debt, whereas cyber engineers wanted compliant software and therefore wanted the pipeline to fail on any security requirement that was not met. In this blog post, I explore how our team managed, and eventually resolved, the two competing forces of developer velocity and cybersecurity enforcement by implementing DevSecOps practices.

At the beginning of the project, I observed that the speed of developing new features was the highest priority: each unit of work was assigned points based on the number of days it took to finish, and points were tracked weekly by product owners. To complete the unit of work by the deadline, developers made tradeoffs, deferring certain software-design decisions as backlog issues or technical debt to push features into production. Cyber operators, however, sought full compliance of the software with the project’s security policies before it was pushed to production. These operators, as a previous post explained, sought to enforce a DevSecOps principle of alerting “someone to a problem as early in the automated-delivery process as possible so that that person [could] intervene and resolve the issues with the automated processes.” These conflicting objectives were sometimes resolved by either sacrificing developer velocity in favor of security-policy enforcement or bypassing security policies to enable faster development.

In addition to maintaining velocity and security, there were other minor hurdles that contributed to the problem of balancing developer velocity with cybersecurity enforcement. The customer had developers with varying degrees of experience in secure-coding practices. Various security tools were available but not frequently used since they were behind separate portals with different passwords and policies. Staff turnover was such that employees who left did not share their knowledge with new hires, which created gaps in the understanding of certain software systems and thereby increased the risk in deploying new software. I worked with the customer to develop two strategies to remedy these problems: adoption of DevSecOps practices and tools that implemented cyber policies in an automated way.

Adopting DevSecOps

A continuous-integration pipeline with some automated tests had been partially implemented before I joined the project. Deployment was a manual process, projects had varying implementations of tests, and review of security practices was deferred as a task item just before a major release. Until recently, the team relied on developers to have secure-coding expertise, but there was no way to enforce this on the codebase other than through peer review. Some automated tools were available for developer use, but they required logging in to an external portal and running tests manually there, so these tools were used infrequently. Automating the enforcement mechanism for security policies (following the DevSecOps model) shortened the feedback loop that developers received after running their builds, which allowed for more rapid, iterative development. Our team created a standard template that could be easily shared among all teams so it could be included as part of their automated builds.

The standard template prescribed the tests that implemented the program’s cyber policy. Each policy corresponded to an individual test, which ran every time a code contributor pushed to the codebase. These tests included the following:

- Container scanning: Since containers were used to package and deploy applications, it was necessary to determine whether any layers of the imported image had existing security vulnerabilities.

- Static application testing: This type of testing helped prevent pushing code that had high cyclomatic complexity, was vulnerable to buffer-overflow attacks, or contained other common programming errors that introduce vulnerabilities.

- Dependency scanning: After the SolarWinds attack, greater emphasis has been put on securing the software supply chain. Dependency scanning looks at imported libraries to detect any existing vulnerabilities in them.

- Secret detection: A test that alerts developers to any tokens, credentials, or passwords they may have introduced into the codebase, thereby compromising the security of the project.
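
As a rough sketch of how such a template might be laid out, the fragment below maps each policy to its own stage and pulls each job definition from its own file. It assumes GitLab CI-style syntax (the post does not name the CI tool), and the stage names and file paths are hypothetical.

```yaml
# Hypothetical standard security template: one stage per policy.
# Assumes GitLab CI-style keywords (stages, include:local).
# All stage names and file paths are illustrative, not taken from the project.
stages:
  - build
  - container_scanning
  - static_analysis
  - dependency_scanning
  - secret_detection

include:
  # Each file is assumed to define a single job bound to the stage of the same name.
  - local: /policies/container_scanning.yaml
  - local: /policies/static_analysis.yaml
  - local: /policies/dependency_scanning.yaml
  - local: /policies/secret_detection.yaml
```

In this layout, stages run in the listed order and a failing job stops the pipeline by default, so an unmet policy blocks everything after it.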

There are several advantages to running each policy in its own stage. These advantages go back to long-standing best practices in software engineering, as expressed, for example, in the Unix philosophy, agile software methodologies, and many seminal works. They include modularity, chaining, and standard interfaces:

- Individual stages on a pipeline, each executing a single policy, provide modularity so that each policy can be developed, changed, and expanded on without affecting other stages (the term “orthogonality” is sometimes used). This modularity is a key attribute in enabling refactoring.

- Individual stages also allow for chaining workflows, whereby one stage produces an artifact and a later stage takes that artifact as its input and produces a new output. This pattern is clearly seen in Unix programs based on pipes and filters, where a program takes the output of another program as its input and creates new workflows thereafter.

- Making each policy into its own stage also allows for a clear distinction of software layers through standard interfaces, where a security operator could look at a stage, see if it passed, and perhaps change a configuration file without having to delve into the internals of the software implementing the stage.

These three key attributes resolved the issue of having multiple team members coding and refactoring security policies without a long onboarding process. It meant security scans were always run as part of the build process, and developers did not have to remember to go to different portals and execute on-demand scans. The approach also opened up the possibility of chaining stages since the artifact of one job could be passed on to the next.

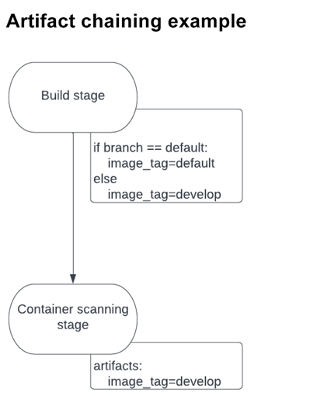

In one instance, a build job created an image tag that changed depending on the kind of branch on which it was being deployed. The tag was saved as an artifact and passed along to the next stage: container scanning. This stage required the correct image tag to perform the scanning. If the wrong tag was provided, the job would fail. Since the tag name could change depending on the build job, it could not work as a global variable. By passing the tag along as an artifact, however, the container-scanning stage was guaranteed to use the right tag. The accompanying diagram illustrates this flow.
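
One way to hand a computed tag from one job to the next is sketched below. It assumes GitLab CI-style syntax, where a job can publish variables to later jobs through a dotenv report artifact. The job names, the IMAGE_TAG variable, and the rule for choosing the tag are hypothetical, and the image build and the scanner invocation are left as placeholders.

```yaml
# Sketch only: job names, IMAGE_TAG, and the tag rule are assumptions.
stages:
  - build
  - container_scanning

build_image:
  stage: build
  script:
    # Assumed rule: "latest" on the default branch, the branch slug otherwise.
    - |
      if [ "$CI_COMMIT_BRANCH" = "$CI_DEFAULT_BRANCH" ]; then
        IMAGE_TAG="latest"
      else
        IMAGE_TAG="$CI_COMMIT_REF_SLUG"
      fi
    # Building and pushing "$CI_REGISTRY_IMAGE:$IMAGE_TAG" would happen here (omitted).
    # Persist the tag so that later jobs receive it as a variable.
    - echo "IMAGE_TAG=$IMAGE_TAG" > build.env
  artifacts:
    reports:
      dotenv: build.env

container_scanning:
  stage: container_scanning
  needs:
    - job: build_image
      artifacts: true
  script:
    # Placeholder for the scanner; IMAGE_TAG comes from the build job's dotenv artifact.
    - echo "Scanning $CI_REGISTRY_IMAGE:$IMAGE_TAG"
```

Because the tag travels with the build job's artifact rather than living in a global variable, the scanning job always sees the tag that this particular build produced.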

Declarative Security Policies

In certain situations, there are several advantages to using declarative rather than imperative coding practices. Instead of specifying how something is implemented, declarative expressions state what should happen. Using commercial tools, we can specify a configuration file in the popular YAML language. The pipeline takes care of running the builds while the configuration file indicates which tests to run (and with what parameters). In this way, developers don’t have to worry about the specifics of how the pipeline works but only about the tests they wish to run, which corresponds with the modularity, chaining, and interface attributes described previously. An example stage is shown below:

    container_scanning:
      docker_img: example-registry.com/my-project:latest
      include:
        - container_scanning.yaml

The file defines a container_scanning stage, which scans a Docker image and determines whether there are any known vulnerabilities for it (through the use of open-source vulnerability trackers). The Docker image, which can reside in a local or remote repository, is defined in the stage. The actual details of how the container_scanning stage works are in the container_scanning.yaml file. By abstracting the functionality of this stage away from the main configuration file, we make the configuration modular, chainable, and easier to understand, conforming to the principles previously discussed.
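
To illustrate what the abstracted file might hold, here is a minimal sketch of a possible container_scanning.yaml. The post does not show its contents, so everything here is an assumption: GitLab CI-style syntax, the open-source Trivy scanner as the tool, and a hypothetical DOCKER_IMG variable that mirrors the docker_img setting above. It also assumes the including pipeline declares a container_scanning stage; registry authentication is omitted.

```yaml
# container_scanning.yaml (hypothetical contents, not the project's actual file)
container_scanning:
  stage: container_scanning
  image:
    # Trivy's published image; the entrypoint is cleared so the CI runner can execute the script.
    name: aquasec/trivy:latest
    entrypoint: [""]
  script:
    # Exit non-zero, and therefore fail the job, if HIGH or CRITICAL vulnerabilities are found.
    # DOCKER_IMG is an assumed variable supplied by the including project.
    - trivy image --exit-code 1 --severity HIGH,CRITICAL "$DOCKER_IMG"
```

A security operator could tighten or relax the policy by editing the severity list in this one file, without touching any project's main configuration.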

Rollout and Learnings

We tested our DevSecOps implementation by having two teams use the template in their projects and check whether security artifacts were being generated as expected. From this initial batch, we found that (1) the standard template approach worked and (2) teams could independently take the template and make minor adjustments to their projects as necessary. We next rolled out the template for the rest of the teams to implement in their projects.

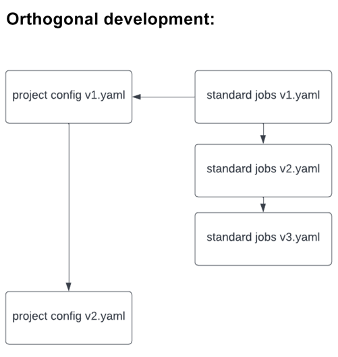

After we rolled out the template to all teams, I realized that any change to the template meant that every team would have to implement the change themselves, which incurred inefficient and unnecessary work (on top of the features that teams were working to develop). To avoid this extra work, the standard security template could be included as a dependency in each team’s own project template (much as code libraries are imported into files) using the include keyword in the pipeline’s YAML configuration. This approach allowed developers to pass down project-specific configurations as variables, which would be handled by the template. It also allowed those developing the standard template to make necessary changes in an orthogonal way, without requiring each team to edit its own project.
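
A project-level configuration along these lines might look like the following sketch. It assumes GitLab CI-style syntax for including a file from another repository. The project path, branch name, file name, and the DOCKER_IMG variable are all hypothetical.

```yaml
# Project pipeline configuration (sketch; path, ref, and file name are assumptions).
include:
  - project: security/pipeline-templates
    ref: main
    file: /security-template.yaml

variables:
  # Project-specific value passed down to the shared template (variable name is assumed).
  DOCKER_IMG: "example-registry.com/my-project:latest"
```

Pointing ref at a branch means template updates reach every project on its next pipeline run, while pinning it to a tag would let teams adopt changes on their own schedule.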

Result: A Better Understanding of Security Vulnerabilities

The implementation of DevSecOps principles in the pipeline enabled teams to have a better understanding of their security vulnerabilities, with guards in place to automatically enforce cyber policy. The automation of policy enabled a quick feedback loop for developers, which maintained their velocity and increased the compliance of written code. New members of the team quickly picked up on creating secure code by reusing the standard template, without having to know the internals of how these jobs work, thanks to the interface that abstracts away unnecessary implementation details. Velocity and security were therefore both achieved in a DevSecOps pipeline in a way that scales to multiple teams.