Amazon Redshift is a quick, absolutely managed cloud knowledge warehouse that makes it easy and cost-effective to research all of your knowledge utilizing normal SQL and your present enterprise intelligence (BI) instruments. Amazon Redshift knowledge sharing permits for a safe and simple approach to share reside knowledge for studying throughout Amazon Redshift clusters. It permits an Amazon Redshift producer cluster to share objects with a number of Amazon Redshift shopper clusters for learn functions with out having to repeat the information. With this strategy, workloads remoted to totally different clusters can share and collaborate ceaselessly on knowledge to drive innovation and supply value-added analytic providers to your inner and exterior stakeholders. You possibly can share knowledge at many ranges, together with databases, schemas, tables, views, columns, and user-defined SQL capabilities, to supply fine-grained entry controls that may be tailor-made for various customers and companies that each one want entry to Amazon Redshift knowledge. The function itself is straightforward to use and combine into present BI instruments.

On this submit, we focus on Amazon Redshift knowledge sharing, together with some finest practices and concerns.

How does Amazon Redshift knowledge sharing work ?

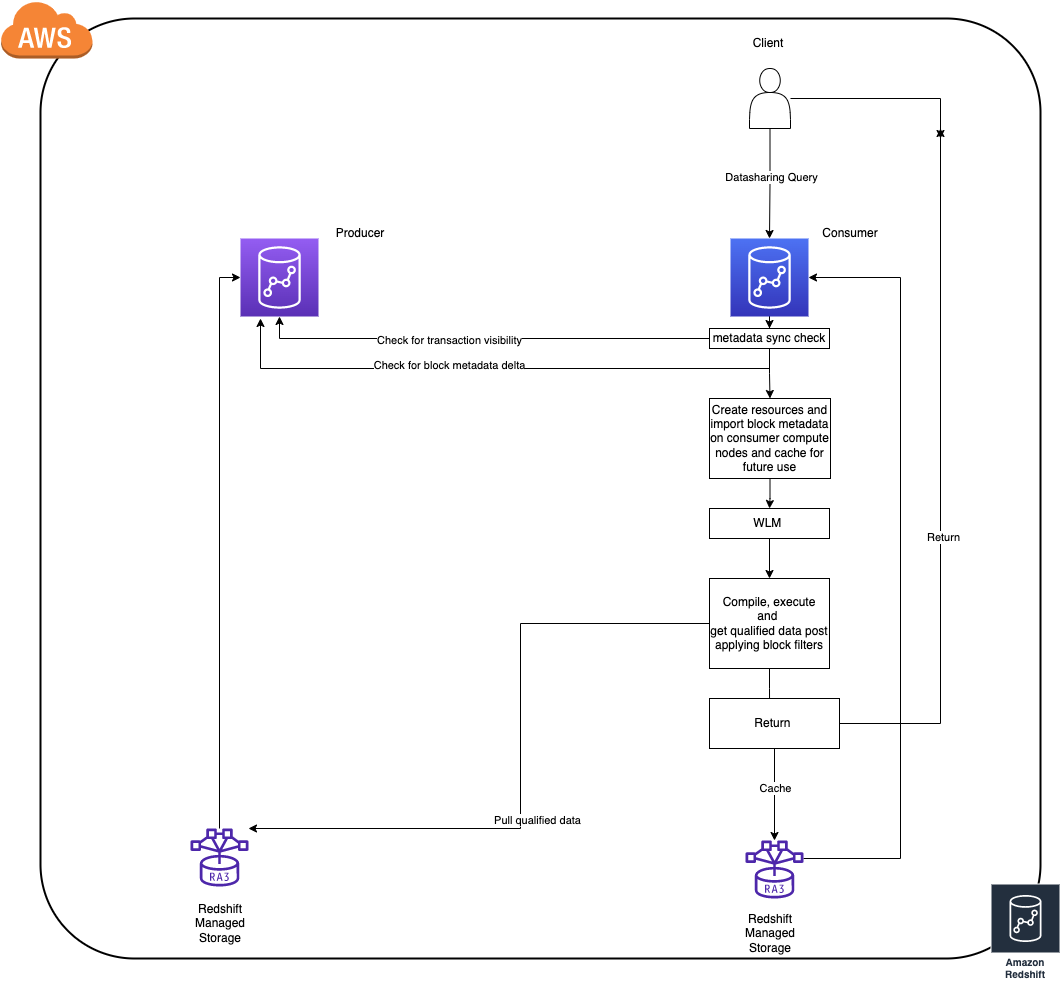

- To attain finest in school efficiency Amazon Redshift shopper clusters cache and incrementally replace block stage knowledge (allow us to confer with this as block metadata) of objects which might be queried, from the producer cluster (this works even when cluster is paused).

- The time taken for caching block metadata relies on the speed of the information change on the producer for the reason that respective object(s) have been final queried on the patron. (As of right this moment the patron clusters solely replace their metadata cache for an object solely on demand i.e. when queried)

- If there are frequent DDL operations, the patron is pressured to re-cache the total block metadata for an object throughout the subsequent entry to keep up consistency as to allow reside sharing as construction adjustments on the producer invalidate all the present metadata cache on the shoppers.

- As soon as the patron has the block metadata in sync with the newest state of an object on the producer that’s when the question would execute as every other common question (question referring to native objects).

Now that now we have the required background on knowledge sharing and the way it works, let’s take a look at just a few finest practices throughout streams that may assist enhance workloads whereas utilizing knowledge sharing.

Safety

On this part, we share some finest practices for safety when utilizing Amazon Redshift knowledge sharing.

Use INCLUDE NEW cautiously

INCLUDE NEW is a really helpful setting whereas including a schema to an information share (ALTER DATASHARE). If set to TRUE, this robotically provides all of the objects created sooner or later within the specified schema to the information share robotically. This won’t be preferrred in circumstances the place you need to have fine-grained management on objects being shared. In these circumstances, go away the setting at its default of FALSE.

Use views to realize fine-grained entry management

To attain fine-grained entry management for knowledge sharing, you possibly can create late-binding views or materialized views on shared objects on the patron, after which share the entry to those views to customers on shopper cluster, as a substitute of giving full entry on the unique shared objects. This comes with its personal set of concerns, which we clarify later on this submit.

Audit knowledge share utilization and adjustments

Amazon Redshift gives an environment friendly approach to audit all of the exercise and adjustments with respect to an information share utilizing system views. We are able to use the next views to verify these particulars:

Efficiency

On this part, we focus on finest practices associated to efficiency.

Materialized views in knowledge sharing environments

Materialized views (MVs) present a strong path to precompute complicated aggregations to be used circumstances the place excessive throughput is required, and you may instantly share a materialized view object through knowledge sharing as effectively.

For materialized views constructed on tables the place there are frequent write operations, it’s preferrred to create the materialized view object on the producer itself and share the view. This technique provides us the chance to centralize the administration of the view on the producer cluster itself.

For slowly altering knowledge tables, you possibly can share the desk objects instantly and construct the materialized view on the shared objects instantly on the patron. This technique provides us the pliability of making a custom-made view of information on every shopper in keeping with your use case.

This may help optimize the block metadata obtain and caching occasions within the knowledge sharing question lifecycle. This additionally helps in materialized view refreshes as a result of, as of this writing, Redshift doesn’t help incremental refresh for MVs constructed on shared objects.

Components to contemplate when utilizing cross-Area knowledge sharing

Information sharing is supported even when the producer and shopper are in several Areas. There are just a few variations we have to contemplate whereas implementing a share throughout Areas:

- Client knowledge reads are charged at $5/TB for cross area knowledge shares, Information sharing throughout the similar Area is free. For extra data, confer with Managing value management for cross-Area knowledge sharing.

- Efficiency will even differ when in comparison with a uni-Regional knowledge share as a result of the block metadata trade and knowledge switch course of between the cross-Regional shared clusters will take extra time as a consequence of community throughput.

Metadata entry

There are a lot of system views that assist with fetching the listing of shared objects a consumer has entry to. A few of these embrace all of the objects from the database that you simply’re at present linked to, together with objects from all the opposite databases that you’ve entry to on the cluster, together with exterior objects. The views are as follows:

We recommend utilizing very restrictive filtering whereas querying these views as a result of a easy choose * will end in a whole catalog learn, which isn’t preferrred. For instance, take the next question:

This question will attempt to acquire metadata for all of the shared and native objects, making it very heavy when it comes to metadata scans, particularly for shared objects.

The next is a greater question for attaining the same outcome:

This can be a good apply to observe for all metadata views and tables; doing so permits seamless integration into a number of instruments. You can too use the SVV_DATASHARE* system views to solely see shared object-related data.

Producer/shopper dependencies

On this part, we focus on the dependencies between the producer and shopper.

Affect of the patron on the producer

Queries on the patron cluster can have no impression when it comes to efficiency or exercise on the producer cluster. This is the reason we will obtain true workload isolation utilizing knowledge sharing.

Encrypted producers and shoppers

Information sharing seamlessly integrates even when each the producer and the patron are encrypted utilizing totally different AWS Key Administration Service (AWS KMS) keys. There are subtle, extremely safe key trade protocols to facilitate this so that you don’t have to fret about encryption at relaxation and different compliance dependencies. The one factor to ensure is that each the producer and shopper are in a homogeneous encryption configuration.

Information visibility and consistency

An information sharing question on the patron can’t impression the transaction semantics on the producer. All of the queries involving shared objects on the patron cluster observe read-committed transaction consistency whereas checking for seen knowledge for that transaction.

Upkeep

If there’s a scheduled guide VACUUM operation in use for upkeep actions on the producer cluster on shared objects, you need to use VACUUM recluster every time doable. That is particularly essential for giant objects as a result of it has optimizations when it comes to the variety of knowledge blocks the utility interacts with, which leads to much less block metadata churn in comparison with a full vacuum. This advantages the information sharing workloads by lowering the block metadata sync occasions.

Add-ons

On this part, we focus on extra add-on options for knowledge sharing in Amazon Redshift.

Actual-time knowledge analytics utilizing Amazon Redshift streaming knowledge

Amazon Redshift lately introduced the preview for streaming ingestion utilizing Amazon Kinesis Information Streams. This eliminates the necessity for staging the information and helps obtain low-latency knowledge entry. The info generated through streaming on the Amazon Redshift cluster is uncovered utilizing a materialized view. You possibly can share this as every other materialized view through a knowledge share and use it to arrange low-latency shared knowledge entry throughout clusters in minutes.

Amazon Redshift concurrency scaling to enhance throughput

Amazon Redshift knowledge sharing queries can make the most of concurrency scaling to enhance the general throughput of the cluster. You possibly can allow concurrency scaling on the patron cluster for queues the place you anticipate a heavy workload to enhance the general throughput when the cluster is experiencing heavy load.

For extra details about concurrency scaling, confer with Information sharing concerns in Amazon Redshift.

Amazon Redshift Serverless

Amazon Redshift Serverless clusters are prepared for knowledge sharing out of the field. A serverless cluster can even act as a producer or a shopper for a provisioned cluster. The next are the supported permutations with Redshift Serverless:

- Serverless (producer) and provisioned (shopper)

- Serverless (producer) and serverless (shopper)

- Serverless (shopper) and provisioned (producer)

Conclusion

Amazon Redshift knowledge sharing provides you the flexibility to fan out and scale complicated workloads with out worrying about workload isolation. Nonetheless, like every system, not having the precise optimization strategies in place may pose complicated challenges in the long run because the programs develop in scale. Incorporating the very best practices listed on this submit presents a approach to mitigate potential efficiency bottlenecks proactively in numerous areas.

Attempt knowledge sharing right this moment to unlock the total potential of Amazon Redshift, and please don’t hesitate to attain out to us in case of additional questions or clarifications.

In regards to the authors

BP Yau is a Sr Product Supervisor at AWS. He’s enthusiastic about serving to clients architect huge knowledge options to course of knowledge at scale. Earlier than AWS, he helped Amazon.com Provide Chain Optimization Applied sciences migrate its Oracle knowledge warehouse to Amazon Redshift and construct its subsequent technology huge knowledge analytics platform utilizing AWS applied sciences.

BP Yau is a Sr Product Supervisor at AWS. He’s enthusiastic about serving to clients architect huge knowledge options to course of knowledge at scale. Earlier than AWS, he helped Amazon.com Provide Chain Optimization Applied sciences migrate its Oracle knowledge warehouse to Amazon Redshift and construct its subsequent technology huge knowledge analytics platform utilizing AWS applied sciences.

Sai Teja Boddapati is a Database Engineer primarily based out of Seattle. He works on fixing complicated database issues to contribute to constructing probably the most consumer pleasant knowledge warehouse out there. In his spare time, he loves travelling, enjoying video games and watching films & documentaries.

Sai Teja Boddapati is a Database Engineer primarily based out of Seattle. He works on fixing complicated database issues to contribute to constructing probably the most consumer pleasant knowledge warehouse out there. In his spare time, he loves travelling, enjoying video games and watching films & documentaries.